entropy(iris$Species) # 1.584963Īs expected, we now see non-zero entropy. We can use our entropy function again to see this. If you imagine a bucket filled with differently coloured balls representing the different species of Iris, we now have less knowledge about what colour of ball (what species) we would draw at random from the bucket. Therefore, we’d expect a higher entropy if we include the complete data. However, in the iris dataset we actually have 3 species of Iris (Setosa, Versicolor, Virginica), each representing 1/3 of the data. If we were asked to draw one observation at random and predict the species, we know we’d draw a Setosa.

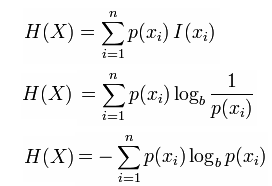

Suppose we took a subset of the iris data containing only the Setosa species: setosa_subset 0]Īs expected, we see that our entropy is indeed 0, indicating that we have complete knowledge of the contents of this set. Where \(\ p_i\) is the probability of value \(\ i\) and \(\ n\) is the number of possible values. In short, entropy provides a measure of purity. For an intuitive, detailed account (and an intuitive derivation of the formula below), check out Shannon Entropy, Information Gain, and Picking Balls from Buckets. In fact, you can think of Entropy as having a perfect inverse relationship with knowledge, where the more knowledge we have, the lower the entropy. By “knowledge” here I mean how certain we are of what we would draw at random from the set. Which of these 4 features provides the “purest” segmentation with respect to Species? Or to put it differently, if you were to place a bet on the correct species, and could only ask for the value of 1 measurement, which one would give you the greatest likelihood of winning your bet?įor starters, let’s define what we mean by Entropy and Information Gain.Įntropy, as it pertains to information theory, tells us something about the amount of knowledge we have about a given set of things (in this case, our set of things would the Species of Iris). # Sepal.Length Sepal.Width Petal.Length Petal.Width Species

To keep things simple, we’ll explore the Iris dataset (measurements in centimeters for 3 species of iris). In this post I’ll talk a bit about how to use Shannon Entropy and Information Gain to help with this. You may also be interested in exploring which variables provide the most information about whether or not a customer has churned. Suppose you’re exploring a new dataset on customer churn. Entropy, Information Gain, and Data Exploration in R Philippe Jette Jan 2nd, 2019Įxploring a new dataset is all about generally getting to know your surroundings, understanding the data structure, understanding ranges and distributions, and getting a sense of patterns and relationships.
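The `entropy` function and the `setosa_subset` object used in this post are not defined in the text shown here, so the following is only a minimal sketch of what they might look like, assuming base-2 Shannon entropy computed from the observed class frequencies. Both definitions are reconstructions for illustration, not necessarily the original code.

```r
# Shannon entropy (in bits) of a categorical vector.
# NOTE: a hypothetical reconstruction; the post's own definition is not shown.
entropy <- function(x) {
  counts <- table(x)                    # frequency of each value (unused factor levels count as 0)
  p <- counts[counts > 0] / length(x)   # keep observed values only, so 0 * log2(0) never arises
  -sum(p * log2(p))
}

# Assumed form of the Setosa-only subset described in the text.
setosa_subset <- iris[iris$Species == "setosa", ]

entropy(setosa_subset$Species)  # 0: only one species is present
entropy(iris$Species)           # 1.584963, i.e. log2(3)
```

Dropping zero counts before taking the logarithm matters for the subset case: `Species` is a factor, so `table()` still reports the two absent species with a count of 0, and `0 * log2(0)` would otherwise produce `NaN`.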
